Recent Blog Posts

Five Years with Hugo

A look back at 5 years of experience publishing with Hugo.

During a break over Christmas 2020, I rebuilt this site, moving from WordPress to Hugo. After more than 5 years of publishing with Hugo, I’d like to share what I’ve learned, what’s worked, what hasn’t, and why, for once, I’m happy with the platform I’m using.

This review builds on two recent articles, Five Hundred, a retrospective of 500 posts to this site, and Lessons Learned from 20 Years & Why You Should Blog, a look back at 20 years of publishing here, and the value of writing & blogging more generally. In this post, I will be diving into publishing with Hugo specifically, what’s good, what’s not, and what you should think about if you are considering it.

Read more…

AI & IAM: Focus on Fundamentals

On the need to address fundamental issues when integrating new technologies.

A recent article, The Future of Cybersecurity Includes Non-Human Employees, discussed the growing need to manage access granted to the rapidly expanding number of AI agents being deployed in companies. This is a deeply important topic, and particularly timely, as many are facing this challenge today. While I do want to address that topic, I also want to address how this is being framed.

Aside from leaning into an inflammatory tone with the use of “Non-Human Employees” as part of the title of the piece, there’s a deeper issue I see with how this is being framed, and it’s also related to AI. Importantly, this is far from unique to this article, but a trend across discussions of AI (and other new & emerging technologies). For the pragmatic security practitioner, a clear understanding of this framing device and the danger of accepting it unchallenged is of particular import.

Read more…

On Privacy Nihilism

On the feeling of futility and the importance of action.

Amongst the steady stream of marketing emails for gift cards and other last minute gifts in the days before Christmas, buried in the noise sent when people are least likely to see it, was a notice. It was an all-too-familiar “we take your privacy seriously, but” email. Perfectly timed to make it clear that privacy wasn’t that important.

This wasn’t just my email address being leaked, this was everything. Name, address, income, employer, social security number. Each record stolen was essentially an identity theft kit; everything needed in one place. From a privacy and data security perspective, few things are worse.

Read more…

Dynamic Social Media Images for Hugo

I’m a big fan of Hugo as a publishing platform. It’s the framework behind this site, and it is incredibly flexible - if you are willing to invest the time and effort to make it truly yours. It’s fast, versatile, and has robust theming support. However, it’s also a static site generator, so doing anything dynamic means doing some extra work (as you have to do it at build time).

My life philosophy can be summed up as “work hard to be lazy” - in this case, that means I want a solution for social media sharing (OpenGraph) images that will work without me needing to think about them again. This way, when a link is shared to Bluesky, Mastodon, LinkedIn, or other platforms, a reasonable image will be shown - even if I didn’t include an image for the article.

Read more…

Lessons Learned from 20 Years & Why You Should Blog

Hard-won lessons from two decades of blogging, and why you should start your own.

Twenty years ago, I started publishing articles and essays here, and I recently published the 500th post to this site. After writing 267,897 words here and investing 2,100 hours into this site, I’ve learned a few things, made some mistakes, and I’d like to share some of these insights with you. Whether you are a veteran of the blogosphere or questioning if you should dip your toes in the waters (you should), I think you will find some useful information here.

Read more…

Five Hundred

The 500th post: a look back, and a look ahead.

The year was 2006 when I registered adamcaudill.com and set up WordPress to host this site. I had recently moved, started a new job as a software developer, and I wanted a new place to share thoughts, code, and the insight I was gathering along the way. I made the very first post.

It will be 20 years, next month, since that first post, a short note from someone still finding his legs in the industry and far from finding his legs as a writer. Through the 2000s, the average length of the posts was only 240 words. Far from the long-windedness common in my more recent work.

Read more…

Whose Monkeys Are These?

The 'Somebody Else's Problem' Problem in Leadership

Over the course of my career, I’ve found that there are some principles that are key for people and teams to be effective. One of these is that everything should have an owner. Everything should have someone that is responsible. Everything should have a designated person whose job it is to care about it. This might be bug or vulnerability reports in software, it could be routine processes, or who responds to certain emails.

Read more…

Is Long-form Writing Dead?

In a world where attention spans have been reduced to seconds, college students aren’t expected to read full books, AI is used to summarise anything more than a few sentences, and blogs have been largely replaced with microblogging platforms, is there still a place for long-form writing? In this essay I would like to explore that question; from how we got here to what hope we have for the future.

Read more…

Recent Security Research

Here you will find some of my articles on security research, vulnerabilities I've discovered, or exploits that I've crafted.

Exploiting the Jackson RCE: CVE-2017-7525

Breaking the NemucodAES Ransomware

PL/SQL Developer: HTTP to Command Execution

PL/SQL Developer: Nonexistent Encryption

Verizon Hum Leaking Credentials

Dovestones Software AD Self Password Reset (CVE-2015-8267)

Making BadUSB Work For You – DerbyCon

phpMyID: Fixing Abandoned OSS Software

Evernote for Windows, Arbitrary File Download via Update

VICIDIAL: Multiple Vulnerabilities

Insane Ideas

The Insane Ideas series is a group of ideas that are interesting, crazy, and either amusing or so bad that they should never be pursued. These are not suggestions for something that should exist (unless you like crazy projects), but ideas that have interesting enough elements that they are worth discussing. Hopefully these are seen as amusing, and may serve as prior art should anyone else try these.

Fiction & Short Stories

I occasionally write fiction, and specifically short stories, often exploring the human experience, psychology, and emotion. These stories are experiments and explorations; these do not represent personal views, but serve as a method of studying complex topics.

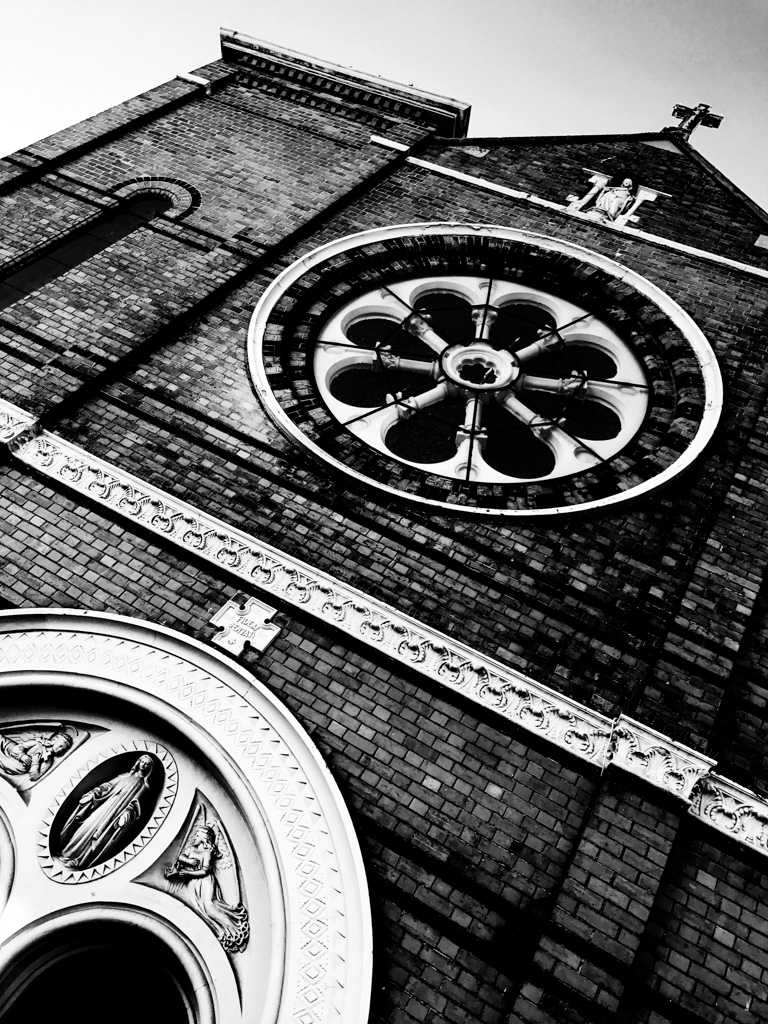

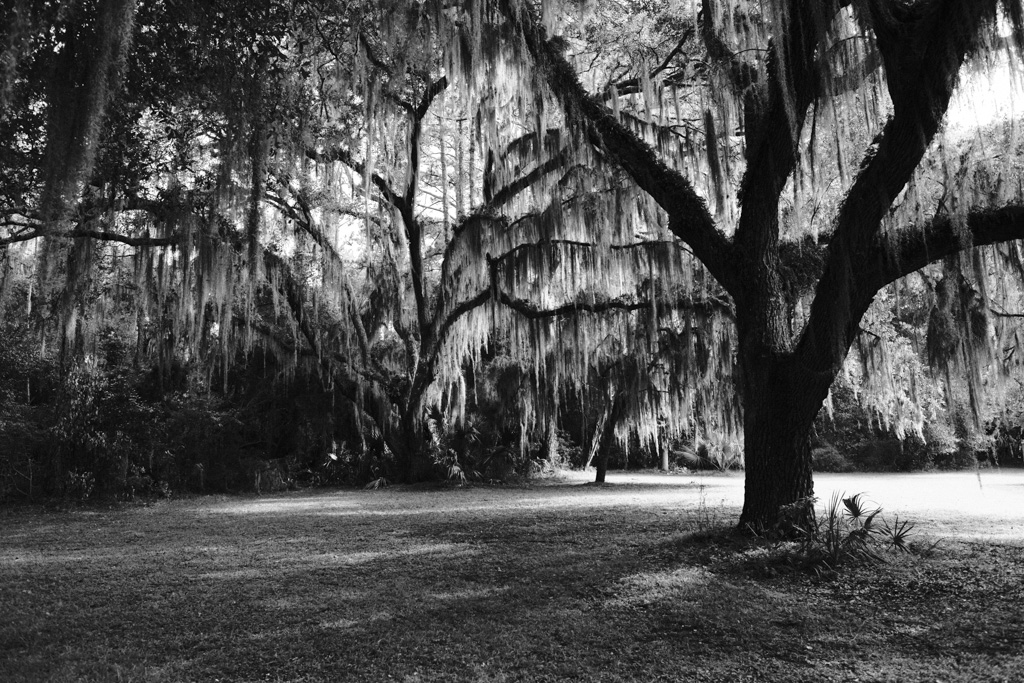

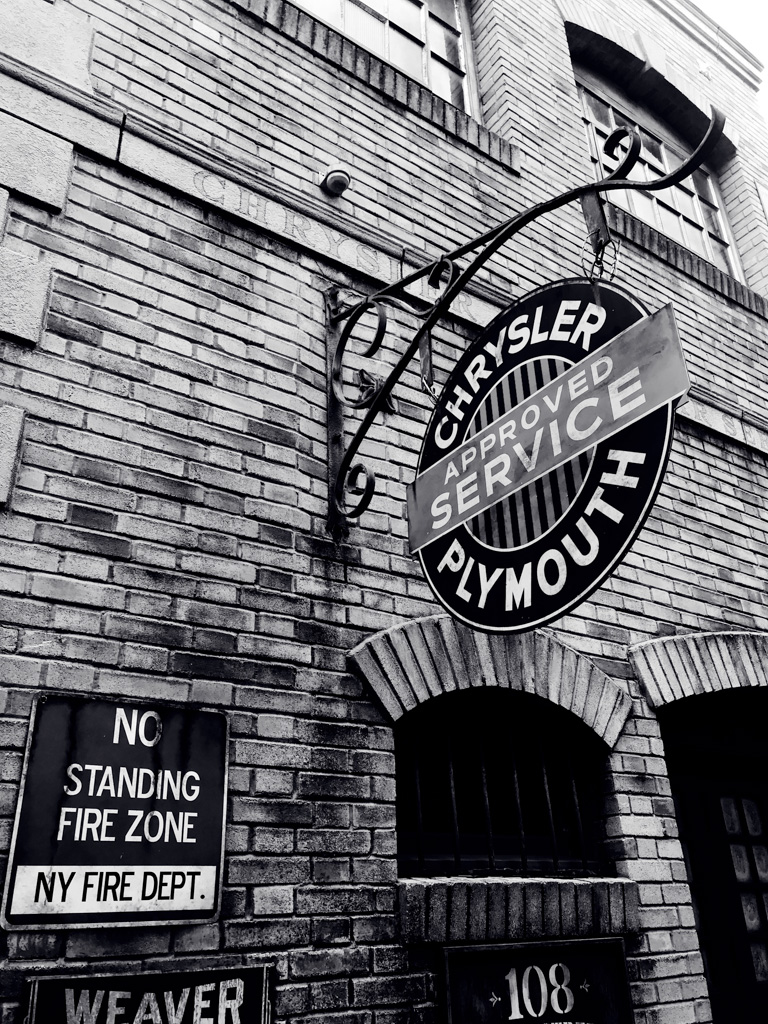

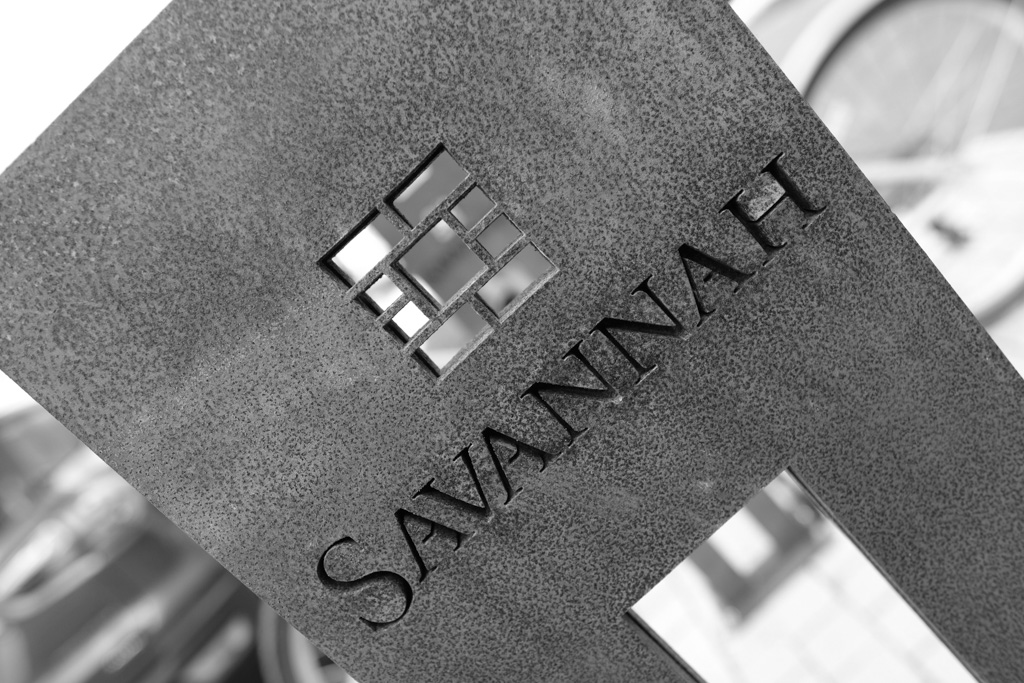

Fine Art Photography

About my Photography | Buy Limited Edition Prints | My Photo Blog

Projects

- YAWAST - The YAWAST Antecedent Web Application Security Toolkit.

- libsodium-net - The .NET library for libsodium; a modern and easy-to-use crypto library.

- ccsrch - Cross-platform credit card (PAN) search tool for security assessments.

- Underhanded Crypto Contest - A competition to write or modify crypto code that appears to be secure, but actually does something evil.

About Adam Caudill

Adam Caudill is a security leader with over 20 years of experience in security and software development, with a focus on application security, secure communications, and cryptography. Active blogger, open source contributor, writer, photographer, and advocate for user privacy and protection. His work has been cited by many media outlets and publications around the world, from CNN to Wired and countless others.